Most AI hiring failures do not start with a bad candidate. They start with a company that was not ready to hire.

The role was vaguely defined. The compensation band was based on a guess. The interview process had not been designed. The team had not agreed on who owns the final decision. Then a recruiter or an internal team starts sourcing, candidates start flowing in, and the entire process stalls because nobody built the foundation.

We see this pattern constantly at Tesoro AI. A company comes to us urgently needing a senior ML engineer. But when we ask what the role actually looks like, what the technical bar is, or what success means in 90 days, the answers are not clear yet. That is not a sourcing problem. That is a readiness problem. And the cost of it is measured in months, not days.

Here is what the best companies do before they ever open a role.

Define the Role Like an Engineer Would

The single most important thing you can do before opening an AI role is define it with precision. Not a generic job description copied from another company’s careers page. A clear, scoped definition of what this person will actually do.

That means answering specific questions before you write a single line of the job post. What problem does this hire solve for the business in the next 6 to 12 months? Is this an applied ML role, a research role, an MLOps role, or a data engineering role? Those are fundamentally different skillsets. If you are not clear on the distinction, you will attract the wrong candidates and waste weeks discovering it during interviews.

What does the tech stack look like today, and what does the roadmap require? A senior ML engineer who has spent five years in PyTorch is not automatically the right fit for a team building production inference pipelines on Kubernetes. Stack alignment matters more than people assume, especially in AI where the gap between research tooling and production tooling is wide.

What does success look like at 30, 60, and 90 days? If you cannot answer this, the role is not ready to open. Not because you need a rigid plan, but because a candidate who has no clear picture of what they are being hired to deliver will either self-select out or underperform once they arrive.

Set a Compensation Band That Reflects Reality, Not Aspiration

Compensation misalignment kills more AI searches than bad sourcing. Teams either set the band too low and cannot attract qualified candidates, or they have no band at all and waste time on candidates who are outside their budget.

For AI roles, the market is specific. A mid-level ML engineer in the U.S. commands $150K to $200K in total compensation. A senior ML engineer commands $200K to $350K, depending on location, domain, and whether equity is competitive. For LATAM-based engineers, bands run from $55K to $80K for mid-level, $85K to $130K for senior, and $130K to $160K+ for staff and lead roles. These are not theoretical ranges. They reflect what it takes to close candidates who have real options.

The best practice here is to set the band before sourcing starts and get alignment from every decision-maker in the loop. If the CTO thinks the budget is $130K and the CEO thinks it is $90K, you will discover that conflict during final-round offers. That costs you the candidate and the months of work it took to get there.
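
One way to surface that conflict before sourcing starts is to write the band down and compare it against what each decision-maker believes the budget is. The sketch below is illustrative only. The `BANDS` table copies the ranges quoted above, while the `check_alignment` helper, the region and level keys, and the way an open-ended band is stored are assumptions made for the example.

```python
# Compensation bands in thousands of USD, copied from the ranges quoted above.
# None marks an open-ended upper bound ("$160K+").
BANDS = {
    ("US", "mid"): (150, 200),
    ("US", "senior"): (200, 350),
    ("LATAM", "mid"): (55, 80),
    ("LATAM", "senior"): (85, 130),
    ("LATAM", "staff_lead"): (130, None),
}


def check_alignment(region, level, budgets):
    """Return a list of alignment problems (hypothetical helper).

    `budgets` maps a stakeholder title to the maximum, in $K, that
    person believes is approved for the role.
    """
    floor, _ceiling = BANDS[(region, level)]
    issues = []
    # Any disagreement between stakeholders is a problem on its own.
    if len(set(budgets.values())) > 1:
        issues.append("stakeholders disagree on budget")
    # A budget below the band floor cannot close a candidate at this level.
    for name, budget in sorted(budgets.items()):
        if budget < floor:
            issues.append(
                f"{name} budget ${budget}K is below the ${floor}K band floor"
            )
    return issues


# The scenario from the text: the CTO assumes $130K, the CEO assumes $90K.
budgets = {"CTO": 130, "CEO": 90}
for issue in check_alignment("LATAM", "senior", budgets):
    print(issue)
```

An empty list means everyone is working from the same number and that number can reach the band. Anything else is a conversation to have before the first candidate is contacted.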

A practical rule: if you do not know what the compensation band should be, talk to a recruiting partner who specializes in AI before you open the role. We do this in our first conversation with every client at Tesoro AI, aligning expectations on compensation and scope early to avoid misalignment later. That 30-minute conversation can save you an entire quarter.

Design the Interview Loop Before You Start Sourcing

Here is what we see at companies that hire well: the interview process is designed, documented, and agreed upon before a single candidate enters the pipeline. Here is what we see at companies that struggle: they start interviewing and figure out the process as they go.

The best AI interview loop for a startup is tight and intentional. One deep technical screen focused on applied skills, not trivia. One system design conversation to evaluate how the candidate thinks about real architecture decisions. One collaboration or culture interview to assess how they operate in ambiguity, communicate trade-offs, and work with cross-functional teams. And a fast decision framework with one clear decision-maker.

No trivia rounds. No marathon interview days. No process where seven people need to sign off. If you need 20 interviews to feel confident in a candidate, the process is broken, not the candidate pool.

The documentation piece matters more than people think. Write down the evaluation criteria for each stage before the first interview. What are you testing for? What does a strong answer look like versus a weak one? When interviewers share a rubric, decisions get faster and more consistent. When they do not, every interview becomes subjective, and your team will disagree on candidates for reasons that have nothing to do with the candidate’s actual ability.
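
A shared rubric can be as simple as a small structure that every interviewer fills in the same way. The sketch below is one hypothetical shape, not a prescribed format. The stage names follow the loop described above, while the individual criteria, the 1-to-4 scale, and the `stage_score` helper are assumptions for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Criterion:
    name: str
    strong_answer: str  # what a strong answer looks like
    weak_answer: str    # what a weak answer looks like


# Hypothetical criteria; replace with what each stage actually tests for.
RUBRIC = {
    "technical_screen": [
        Criterion(
            "applied_skills",
            "builds a working approach and explains the trade-offs",
            "recites definitions without applying them",
        ),
    ],
    "system_design": [
        Criterion(
            "architecture_decisions",
            "weighs real constraints such as latency, cost, and data quality",
            "names tools without justifying the choice",
        ),
        Criterion(
            "production_awareness",
            "plans for monitoring and failure modes",
            "stops at the prototype",
        ),
    ],
    "collaboration": [
        Criterion(
            "communicating_trade_offs",
            "explains options clearly to non-specialists",
            "cannot say why one option was chosen",
        ),
    ],
}


def stage_score(stage, scores):
    """Average the 1-4 scores for one stage.

    Raises ValueError if any criterion for the stage was left unscored,
    so an interviewer cannot skip part of the rubric.
    """
    expected = {criterion.name for criterion in RUBRIC[stage]}
    missing = expected - set(scores)
    if missing:
        raise ValueError(f"unscored criteria: {sorted(missing)}")
    return mean(scores[name] for name in expected)


print(stage_score(
    "system_design",
    {"architecture_decisions": 3, "production_awareness": 4},
))
```

Because the strong and weak anchors are written down before the first interview, two interviewers scoring the same stage are at least disagreeing about the same criteria.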

Clarify Who Owns the Hiring Decision

Decision-making ambiguity is one of the top reasons AI hiring processes stall. The CTO likes a candidate. The VP of Engineering is not sure. The CEO wants to meet everyone. The HR lead is managing expectations from three directions at once. Weeks pass. The candidate takes another offer.

Before you open a role, answer one question: who has the final say? In the most effective hiring processes we work with, there is one decision-maker. That person owns the technical bar, the culture call, and the final yes or no. Everyone else provides input, but the decision does not require consensus from the entire leadership team.

This is not about excluding people from the process. It is about velocity. Every additional approval layer adds days. In the AI talent market, where top candidates are off the market in two to three weeks, days matter.

Build Your Employer Story Before You Need It

Candidates need to know why the opportunity is compelling. This is true for every hire, but it is especially true for AI roles where engineers have options and are evaluating you as much as you are evaluating them.

Before you start sourcing, make sure you can clearly articulate three things. First, what is the mission of the company and how does this role contribute to it? AI engineers who are drawn to startups tend to be curious, mission-driven people. If you cannot explain why the work matters, you lose them before the first conversation.

Second, what is the growth opportunity? In a startup, an AI engineer exercises judgment every day in ways that do not happen inside larger organizations. They make real architecture decisions, work across the full stack, and see the direct impact of their work. If your company offers that, say it clearly.

Third, what is the team and technical environment like? What infrastructure exists? What will they be building from scratch? What does collaboration look like in practice? The more specific you are, the more you attract people who actually want what you are offering rather than people who are just exploring options.

Clear scope and context lead to stronger applicants and faster hiring cycles. We see this every week. The companies that invest 30 minutes in building their employer story before sourcing starts close candidates faster and lose fewer people in the funnel.

Set Realistic Timeline Expectations

AI hiring does not happen in two weeks. If someone promises you that, they are either flooding you with noise or cutting corners on vetting.

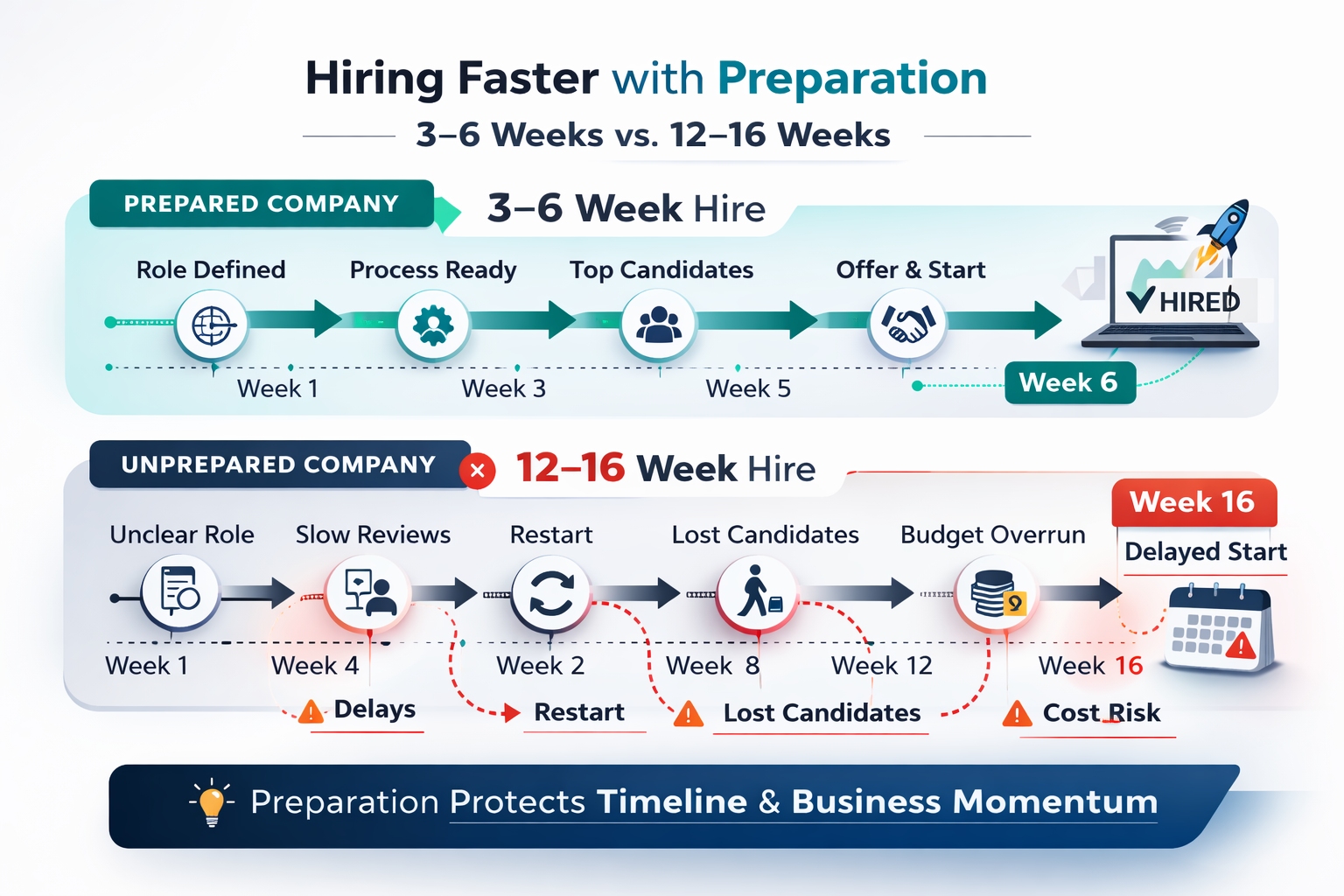

Realistic timelines vary by role. An applied ML engineer typically takes 3 to 6 weeks from kickoff to offer. A senior MLOps hire runs 4 to 8 weeks. A Head of AI or research-heavy ML role can take 8 to 12 weeks. These timelines assume the company is prepared before sourcing begins. If you are still defining the role, building the interview process, or aligning on compensation during the search, add weeks.

The best practice is to set internal timeline expectations before the search kicks off and communicate them to every stakeholder. When the CEO expects a hire in two weeks and the reality is six weeks, frustration builds and bad decisions follow, like rushing a mediocre candidate through because the pressure is too high.

At Tesoro AI, we deliver first candidates in 7 days or less and can build full pods in under 30 days. But that speed is only possible because we work with clients to nail the preparation work upfront. Speed without clarity is just chaos.

Prepare Your Onboarding Before the Offer

This is the step most companies skip entirely. They spend weeks finding and closing the right candidate, and then that person shows up on day one to no documentation, no clear 30-day plan, and no onboarding structure.

For AI roles specifically, onboarding matters more than for most positions. These engineers need access to data, infrastructure, and context about the existing systems before they can contribute. If that access takes two weeks to provision, you have already lost a significant chunk of the value you hired them to deliver.

Before you make the offer, make sure these things are in place: environment access and tooling, a clear first project or set of deliverables for the first 30 days, a designated onboarding buddy or technical point of contact, and documentation on the current state of the codebase, models, and infrastructure. None of this needs to be perfect. It needs to exist.

The companies that onboard AI hires well see faster time-to-productivity and higher retention. The ones that wing it often find their new hire disengaged by month two, wondering if they made the right choice.

The Readiness Checklist: What to Have in Place Before You Open the Role

If you want a practical summary, here is the checklist we walk through with every client before a search begins.

- Role definition: Specific problem the hire solves, role type (applied ML, MLOps, data engineering, research), tech stack requirements, and what success looks like at 30/60/90 days.

- Compensation band: Researched, realistic, and aligned across all stakeholders before sourcing starts.

- Interview process: Stages defined, evaluation criteria documented, interviewers assigned, and a decision-maker identified.

- Employer story: Clear articulation of mission, growth opportunity, team environment, and technical context.

- Timeline: Realistic expectations communicated to leadership, based on the role’s seniority and complexity.

- Onboarding plan: Environment access, first-month deliverables, onboarding buddy, and basic technical documentation ready before the offer is extended.

- Decision-maker: One person who owns the final hiring call, with input from the team but without requiring full-committee consensus.
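
For teams that track this in a script or an internal tool, the checklist above can be reduced to a simple gate. This is a minimal sketch under stated assumptions: the item keys mirror the seven checklist entries, and the `missing_items` and `ready_to_open` helpers are hypothetical names, not part of any existing tool.

```python
# Keys mirror the seven checklist items above, in the same order.
CHECKLIST = (
    "role_definition",
    "compensation_band",
    "interview_process",
    "employer_story",
    "timeline",
    "onboarding_plan",
    "decision_maker",
)


def missing_items(status):
    """Return checklist items not yet marked done, in checklist order.

    `status` maps an item key to True once that item is in place.
    Items absent from `status` are treated as not done.
    """
    return [item for item in CHECKLIST if not status.get(item, False)]


def ready_to_open(status):
    """The role is ready to open only when nothing is missing."""
    return not missing_items(status)


# Example: everything is in place except the onboarding plan and timeline.
status = {item: True for item in CHECKLIST}
status["onboarding_plan"] = False
status["timeline"] = False

print(ready_to_open(status))
print(missing_items(status))
```

Treating unlisted items as not done is a deliberate choice here: a role should fail the gate by default until someone confirms each item.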

None of this is complicated. But it does require discipline. The companies that treat preparation as optional end up spending twice as long on the search, losing good candidates to faster competitors, and making compromises they regret.

The companies that do this work upfront hire faster, hire better, and build AI teams that actually deliver on the roadmap.

Not sure if your team is ready to open that AI role? Book a Fit Call and we’ll walk through the readiness checklist together before you spend a dollar on sourcing.